Imagine picking up your phone first thing in the morning. The glass is cool against your palm, the screen casting a soft, blue glow in a room still heavy with sleep. You ask a complicated question about your schedule, and the text ripples across the screen instantly. No loading bar. No momentary hesitation while the signal bounces off a cell tower to connect with a distant machine.

You might assume that invisible magic is happening in a massive server farm hundreds of miles away. But if you touch the back of your device, just below the camera lens, you might feel a faint, sudden pulse of warmth. That heat is the sign of a paradigm breaking.

The answer you just received never left your room. It wasn’t processed in a chilled warehouse in Oregon. Google Gemini didn’t ask the cloud; it asked the silicon resting directly in your hand.

We are conditioned to believe our devices are merely windows to a smarter, louder universe. But a silent infrastructure pivot has flipped the script, moving the heavy lifting entirely offline.

The Economics of the Local Brain

For the last two years, the tech industry operated under a brute-force illusion: build bigger server farms, pump in more water to cool the racks, and foot the massive electricity bill. Every time you asked an AI to parse an email, it cost the provider a fraction of a cent. Multiply that by billions of queries, and the server strain becomes a financial sinkhole. Cloud compute costs are quietly crushing profit margins for language models.
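To see how a fraction of a cent turns into a sinkhole, here is a rough back-of-envelope sketch. Both inputs are placeholder assumptions chosen for illustration, not figures reported by any provider; swap in your own guesses to see how the total moves.

```python
# Back-of-envelope cloud inference cost.
# Both constants are illustrative assumptions, not real provider figures.
COST_PER_QUERY_USD = 0.002        # assumed: two tenths of a cent per query
QUERIES_PER_DAY = 1_000_000_000   # assumed: one billion queries per day


def daily_cost(cost_per_query: float, queries_per_day: int) -> float:
    """Total daily spend when every query is served from the cloud."""
    return cost_per_query * queries_per_day


def yearly_cost(cost_per_query: float, queries_per_day: int) -> float:
    """The same spend extrapolated over a 365-day year."""
    return daily_cost(cost_per_query, queries_per_day) * 365


if __name__ == "__main__":
    print(f"per day:  ${daily_cost(COST_PER_QUERY_USD, QUERIES_PER_DAY):,.0f}")
    print(f"per year: ${yearly_cost(COST_PER_QUERY_USD, QUERIES_PER_DAY):,.0f}")
```

Under these assumed numbers, the bill lands in the millions of dollars per day, which is the scale of pressure the paragraph above describes.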

The solution wasn’t to build cheaper servers, but to abandon the restaurant and cook inside your own kitchen. By pushing inference directly onto user smartphone hardware, the architecture shifts away from renting server space.

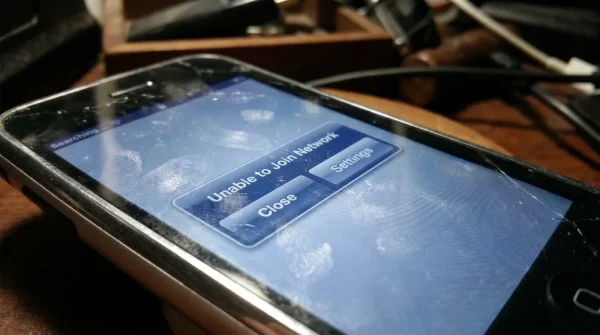

At first glance, this sounds like a burden dumped on your shoulders. Why should your phone drain its battery doing the math a billion-dollar data center used to handle? But this mundane shift reveals a massive advantage. When the processing happens locally, your query never has to leave the device, so you gain stronger privacy and skip the network round trip entirely. You stop being a passenger on the network and become the driver.

Talk to Marcus Thorne, a 41-year-old hardware engineer who spends his days dissecting neural processing units in Seattle. “We hit a wall with the physics of the cloud,” he told a small group of colleagues late last month, holding up a stripped-down motherboard like a precious fossil. “The latency of sending a voice note to a server and waiting for it to return a parsed summary is too slow for human conversation. When we saw Google Gemini pivot to strictly local nodes, it was like breathing through an open window instead of a straw.” Marcus noted that offloading the thought process to the phone’s dedicated neural chip meant the data never traveled through a Wi-Fi router. Your secrets stay in your pocket.

Adapting to the Offline Shift

How this local-first architecture changes your daily rhythm depends entirely on how you lean on your devices. The transition from cloud-dependent tools to an offline powerhouse requires a slight change in how we manage our hardware.

For the Privacy Advocate

If you routinely hesitate before typing sensitive financial data or personal health concerns into a search bar, this shift is your relief. Because Gemini’s localized nodes can operate without a network connection, a query handled on the device is never sent over the network, so it cannot be intercepted in transit, logged on a remote server, or used to train future iterations. The data lives and dies on your local storage.

For the Traveling Professional

Dead zones, airplane mode, and congested conference Wi-Fi are no longer roadblocks. When the model lives inside the silicon of your phone, you can draft complex reports, summarize offline documents, and query localized datasets while cruising at 30,000 feet. You are carrying a pocket-sized supercomputer that doesn’t need to phone home to function.

For the Hardware Minimalist

This pivot does mean your device needs to stretch its legs. Local processing demands a bit more from your system’s memory and thermal limits. You might notice the battery draining slightly faster during heavy synthesis tasks. The payoff is speed: the answer is already in your cup, rather than waiting for the barista to call your name.

Your Tactical Toolkit for Local AI

Managing an offline processing node requires a gentle touch. You aren’t just managing an app anymore; you are managing a small, independent brain.

To keep things running smoothly, you need to clear the mental clutter. Treat your phone’s storage like a clean workbench, leaving enough room for the gears to turn without friction.

- Clear the Cache Weekly: Local models can accumulate temporary files as they work. Go into your app settings and flush the cache to keep the response times sharp.

- Monitor the Heat: If you are asking the device to parse a massive PDF offline, take it out of its thick protective case. Let the glass and metal breathe to prevent thermal throttling.

- Update Over Wi-Fi: While the thinking happens offline, the model itself needs occasional language updates. Schedule these hefty downloads for overnight hours while plugged into the wall.

- Audit Background Refresh: Since the AI chip is pulling power to think locally, reduce the battery drain elsewhere by shutting off background data for apps you rarely use.
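If you like to check the workbench before you start, the storage side of this toolkit can be sketched in a few lines. This is a minimal illustration meant for a laptop or desktop, not something Gemini or your phone exposes: the function name `has_room_for_model` and the 2 GiB of working headroom are assumptions made for the example, while the 4 GiB model size borrows the upper end of the download range mentioned in the FAQ below.

```python
import shutil

GIB = 1024 ** 3  # bytes in one gibibyte


def has_room_for_model(free_bytes: int, model_gib: float = 4.0,
                       headroom_gib: float = 2.0) -> bool:
    """Return True if free space covers the model plus working headroom.

    model_gib and headroom_gib are illustrative defaults, not official
    requirements for any particular on-device model.
    """
    return free_bytes >= (model_gib + headroom_gib) * GIB


if __name__ == "__main__":
    # shutil.disk_usage reports total, used, and free bytes for a path.
    free = shutil.disk_usage("/").free
    print(f"free space: {free / GIB:.1f} GiB")
    print("enough room" if has_room_for_model(free) else "clear some space first")
```

The idea is simply to leave slack beyond the model itself, so the gears have room to turn.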

Owning Your Digital Mind

There is a distinct peace of mind that comes from knowing the boundaries of your digital footprint. For years, we willingly handed over every idle thought, messy draft, and late-night question to a faceless server farm in exchange for convenience. We rented our intelligence.

This sudden infrastructure pivot hands the keys back to you. The realization that your hardware is finally powerful enough to think for itself completely changes the relationship you have with the glass in your pocket. It is no longer just a transmitter. It is a sealed vault.

When you next ask your device to organize a messy cluster of thoughts, notice the speed. Notice the quiet warmth of the processor doing the work. You are experiencing the rare moment where corporate cost-cutting actually benefits your daily life, granting you an untethered, private, and fiercely fast companion.

“The moment we stop waiting for a server to validate our queries is the moment our devices truly become extensions of our own memory.” — Marcus Thorne

| Key Point | The Cloud Method | The Local Advantage |
|---|---|---|
| Privacy | Data is transmitted globally | Data never leaves your hand |
| Speed | Dependent on signal strength | Instantaneous responses |
| Reliability | Fails without Wi-Fi or 5G | Functions flawlessly offline |

Frequent Curiosities

Does local processing ruin my battery life?

It draws slightly more power during active processing, but saves the energy normally spent maintaining a constant radio connection to a server.

Will older phones support offline Gemini?

Only devices with dedicated neural processing units (modern flagship silicon) have the hardware required to run these nodes without stuttering.

Do I need to download a massive file?

Yes, the initial model weights require a significant download, usually ranging from 2 to 4 gigabytes of local storage.

Is it as smart as the cloud version?

The localized node is highly optimized for text and daily tasks, though it may hand off massive, highly complex queries to the cloud if you allow it.

How do I know if it is working offline?

Simply turn on airplane mode and ask a question. If it answers, your local node is doing the heavy lifting.
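For the curious, the "local first, cloud only if you allow it" behavior described above can be pictured as a small routing rule. This is a hypothetical sketch, not Gemini's actual logic: `RoutingPolicy`, `route`, the 2048-token limit, and the whitespace-based token estimate are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class RoutingPolicy:
    # Both fields are hypothetical settings invented for this sketch.
    local_token_limit: int = 2048  # assumed capacity of the on-device model
    allow_cloud: bool = False      # the user's opt-in for cloud handoff


def route(prompt: str, policy: RoutingPolicy) -> str:
    """Return 'local' or 'cloud' for a prompt under the given policy."""
    approx_tokens = len(prompt.split())  # crude whitespace token estimate
    too_big = approx_tokens > policy.local_token_limit
    # Hand off only when the prompt is oversized AND the user opted in.
    return "cloud" if too_big and policy.allow_cloud else "local"


if __name__ == "__main__":
    short = "summarize my notes from this morning"
    huge = "word " * 5000
    print(route(short, RoutingPolicy()))                  # small prompt
    print(route(huge, RoutingPolicy(allow_cloud=True)))   # oversized, opted in
    print(route(huge, RoutingPolicy(allow_cloud=False)))  # oversized, not opted in
```

The design choice worth noticing is the default: with `allow_cloud` left off, nothing is ever routed away from the device, no matter how large the request.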